Stable Diffusion for Architecture: A Free Alternative to Midjourney for AI Renderings

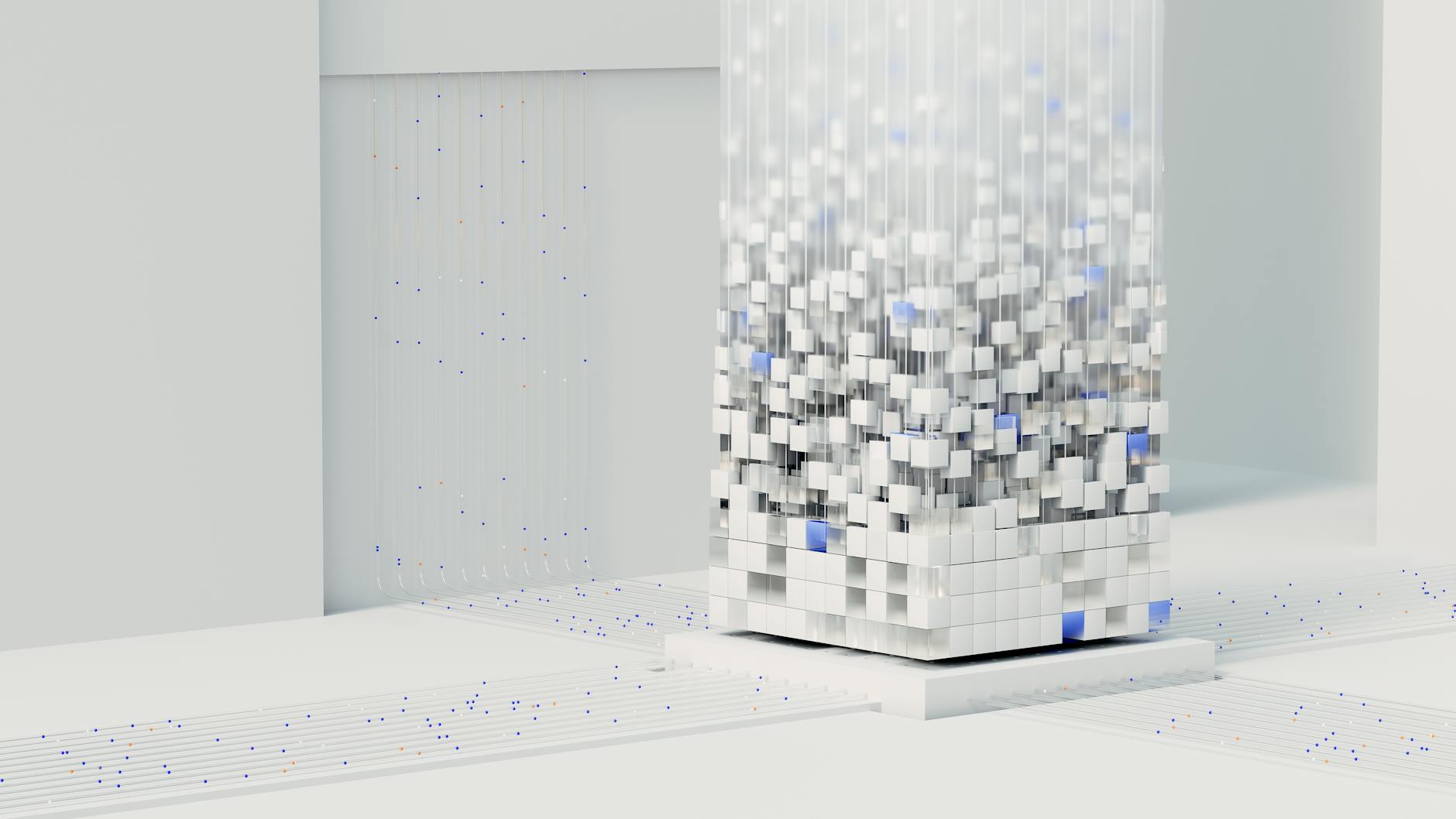

AI image generation is reshaping the work of architects and 3D visualizers. Among the available tools, Stable Diffusion stands out as the open-source reference: a free AI rendering generator that installs locally and offers a serious alternative to Midjourney for professionals concerned about their data and budget. This article explains why more and more architecture firms are adopting Stable Diffusion, how to use it effectively with ControlNet, and how to get trained through a Qualiopi-certified program.

Contents

- What is Stable Diffusion and why architects are interested

- Stable Diffusion vs Midjourney: comparison for architecture professionals

- ControlNet: the essential building block for precise architectural renderings

- Installation and configuration of Stable Diffusion for architecture

- Training in Stable Diffusion: Qualiopi-certified program for architects

- FAQ

What is Stable Diffusion and why architects are interested

Stable Diffusion is a latent diffusion image generation model, developed with the backing of Stability AI and released openly in 2022. Unlike Midjourney, which runs exclusively as an online subscription on the publisher's servers, Stable Diffusion can be installed directly on your own machine. A popular way to run it is the community-maintained AUTOMATIC1111/stable-diffusion-webui project on GitHub, a free web interface; the model weights themselves are downloaded separately.

For an architect or 3D visualizer, the appeal is immediate: you can generate variants of atmosphere, lighting or materials from an existing plan in seconds, without sending project data to third-party servers. This directly addresses the confidentiality of client files, a critical point in public tenders and sensitive projects.

The community around Stable Diffusion is very active. Thousands of specialized models (called "checkpoints") are available, several of them optimized for architecture, interior design and urban visualization. Complementary tools such as ControlNet, LoRA and img2img pipelines give a level of control over the final render that Midjourney does not offer.

Stable Diffusion vs Midjourney: comparison for architecture professionals

Before getting started, here is a factual comparison between the two tools from the perspective of an architecture professional:

| Criterion | Stable Diffusion | Midjourney |
|---|---|---|
| Price | Free (open source) | From $10/month |
| Installation | Local (your PC or server) | Online only (Discord/web) |
| Data confidentiality | Total (nothing leaves your machine) | Data sent to US servers |
| Render control | Very high (ControlNet, masks, img2img) | Medium (prompts only) |
| Learning curve | Moderate to high | Low |
| Plan/schema compatibility | Yes (via ControlNet) | Limited |
| Model customization | Yes (checkpoints, LoRA, fine-tuning) | No |
| Visual quality | Very high (depending on model) | Very high |
| BIM/CAD workflow | Integrable (via image export) | Not natively integrable |
| Community support | Very broad (open source) | Discord community |

For architecture firms that handle confidential files or want to integrate AI generation into a reproducible production flow, Stable Diffusion offers an unmatched level of control and data sovereignty. The fact that the tool is free is an additional advantage, especially for independents funding their upskilling through FIFPL.

Midjourney remains relevant for rapid and intuitive atmosphere exploration, particularly in the sketch phase. For more on this tool, see our guide on Midjourney for architecture.

ControlNet: the essential building block for precise architectural renderings

Where Stable Diffusion generates images from text, ControlNet is the extension that lets you control the geometry, structure and composition of those images from an existing plan, schema or 3D rendering.

Concretely, a ControlNet preprocessor extracts a guide map from your source image (an elevation, a model view, an export from software such as Revit or SketchUp), and ControlNet steers the model to respect the lines of your composition while applying the style or atmosphere defined by a text prompt.

The most useful modes for an architect are:

- Canny: edge detection. Perfect for preserving the geometry of a facade or section.

- Depth: depth map. Maintains spatial relationships between volumes.

- Lineart: reconstruction from a line drawing. Ideal for scanned sketches.

- MLSD: straight line detection. Particularly suited to interior spaces.

- Normal Map: preserves surface and plane orientation information.
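To make the idea of an edge-based guide map concrete, here is a minimal pure-Python sketch. It is not the Canny preprocessor that ControlNet ships: it only thresholds the Sobel gradient magnitude, skipping the blurring, non-maximum suppression and hysteresis steps of real Canny. The function name `edge_map` and the toy image are illustrative assumptions.

```python
from typing import List

Gray = List[List[int]]  # grayscale image as rows of 0-255 values


def edge_map(image: Gray, threshold: int = 100) -> Gray:
    """Return a binary edge map (0 or 255) from Sobel gradient magnitude.

    Simplified stand-in for Canny: no blur, no non-maximum suppression,
    no hysteresis. Border pixels are left at 0.
    """
    height, width = len(image), len(image[0])
    edges = [[0] * width for _ in range(height)]
    for y in range(1, height - 1):
        for x in range(1, width - 1):
            # Horizontal Sobel response (right column minus left column).
            gx = (image[y - 1][x + 1] + 2 * image[y][x + 1] + image[y + 1][x + 1]
                  - image[y - 1][x - 1] - 2 * image[y][x - 1] - image[y + 1][x - 1])
            # Vertical Sobel response (bottom row minus top row).
            gy = (image[y + 1][x - 1] + 2 * image[y + 1][x] + image[y + 1][x + 1]
                  - image[y - 1][x - 1] - 2 * image[y - 1][x] - image[y - 1][x + 1])
            if (gx * gx + gy * gy) ** 0.5 >= threshold:
                edges[y][x] = 255
    return edges


# Toy "facade": a dark wall on the left meeting a bright wall on the right.
facade = [[0, 0, 0, 255, 255, 255] for _ in range(5)]
edges = edge_map(facade)
for row in edges:
    print(row)
```

The vertical boundary between the two walls is the only feature that survives, which is the kind of line information a Canny-style map hands to the model so that the facade geometry is preserved.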

A typical workflow for a visualizer is to export a view from Autodesk Revit or AutoCAD, import it into a Stable Diffusion web interface (AUTOMATIC1111 or ComfyUI), select the appropriate ControlNet preprocessor, then write a prompt describing the desired atmosphere: natural light, materials, vegetation, human presence, time of day.

The result is a realistic rendering consistent with the project, produced in seconds, without going through a classic rendering engine such as Lumion or V-Ray. The time savings are considerable: according to feedback from trained professionals, the atmosphere phase of a project can drop from several hours to under 30 minutes.
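For firms that want to script this workflow rather than click through it, the sketch below assembles a request body for the AUTOMATIC1111 HTTP API (available when the web UI is started with the `--api` flag). It only builds the payload and sends nothing. The ControlNet field names (`image`, `module`, `model`, `weight`), the model name and the default values are assumptions that vary between extension versions, so check them against your own installation. `build_txt2img_payload` is a hypothetical helper, not part of any library.

```python
import base64
import json


def build_txt2img_payload(image_bytes: bytes, atmosphere: dict,
                          module: str = "canny",
                          model: str = "control_v11p_sd15_canny") -> dict:
    """Assemble a txt2img request body with a single ControlNet unit.

    Field names inside "alwayson_scripts" are assumptions; verify them
    against the API documentation of your installed ControlNet extension.
    """
    # Join the atmosphere descriptors into one comma-separated prompt.
    prompt = ", ".join(value for value in atmosphere.values() if value)
    return {
        "prompt": prompt,
        "negative_prompt": "blurry, distorted geometry",
        "steps": 30,
        "cfg_scale": 7,
        "width": 768,
        "height": 512,
        "alwayson_scripts": {
            "controlnet": {
                "args": [{
                    # The exported view, base64-encoded for JSON transport.
                    "image": base64.b64encode(image_bytes).decode("ascii"),
                    "module": module,   # preprocessor
                    "model": model,     # ControlNet model (example name)
                    "weight": 1.0,
                }]
            }
        },
    }


atmosphere = {
    "light": "soft morning natural light",
    "materials": "timber cladding and exposed concrete",
    "vegetation": "mature trees",
    "people": "a few pedestrians",
    "time": "early autumn",
}
# Placeholder bytes stand in for a real PNG export from Revit or AutoCAD.
payload = build_txt2img_payload(b"placeholder-image-bytes", atmosphere)
print(json.dumps(payload, indent=2)[:200])
```

In a real setup the body would be sent as a POST request to the `/sdapi/v1/txt2img` route of the local instance, so the exported view never leaves the machine.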

Installation and configuration of Stable Diffusion for architecture

Installing Stable Diffusion has some technical prerequisites but remains accessible to any professional who has followed suitable training.

Recommended hardware configuration:

- Nvidia GPU with at least 8 GB VRAM (RTX 3060 or higher recommended).

- 16 GB RAM minimum.

- 50 GB free disk space (for models and extensions).

- Windows 10/11 or Linux.

Installation steps (overview):

- Install Python 3.10.x and Git.

- Clone the AUTOMATIC1111 repository via Git.

- Run the launch script, which installs the dependencies automatically.

- Download an architecture-oriented checkpoint model (Juggernaut XL, Realistic Vision, ArchiDiffusion).

- Install the ControlNet extension from the web interface.

- Download the necessary ControlNet models and preprocessors.
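Before starting the steps above, it can help to check the prerequisites automatically. The following is a minimal sketch using only the Python standard library; the function names are hypothetical, and the thresholds simply restate the recommendations given in this article (Python 3.10.x, Git, 50 GB of free disk space). It does not check the GPU.

```python
import shutil
import sys
from typing import List, Optional, Tuple


def preflight_problems(python_version: Tuple[int, int],
                       git_path: Optional[str],
                       free_disk_gb: float) -> List[str]:
    """Return a list of human-readable problems; empty means ready."""
    problems = []
    if tuple(python_version) != (3, 10):
        problems.append(
            f"Python 3.10.x expected, found {python_version[0]}.{python_version[1]}")
    if git_path is None:
        problems.append("Git not found on PATH")
    if free_disk_gb < 50:
        problems.append(f"Only {free_disk_gb:.0f} GB free, 50 GB recommended")
    return problems


def check_this_machine(install_dir: str = ".") -> List[str]:
    """Gather the real values for the current machine and check them."""
    free_gb = shutil.disk_usage(install_dir).free / 1024 ** 3
    return preflight_problems(sys.version_info[:2], shutil.which("git"), free_gb)


if __name__ == "__main__":
    for problem in check_this_machine():
        print("WARNING:", problem)
```

Separating the pure check from the machine probe keeps the rules easy to adjust if a firm standardizes on different requirements.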

Once the environment is configured, the interface works as a complete dashboard with tabs for text-to-image, img2img, inpainting and extras. Getting comfortable in this interface is one of the central objectives of the AI for architects training offered by Educasium.

Recommended models for architecture:

| Model | Preferred use |
|---|---|
| Juggernaut XL | Photorealistic exterior and interior renderings |
| ArchiDiffusion | Specialized in architecture and urbanism |
| Realistic Vision | Lighting atmospheres and materials |
| DreamShaper XL | Concept art and stylized sketches |

Model management, extensions and sampling parameters (steps, CFG scale, sampler) are covered practically in the Educasium training program, with exercises directly drawn from real architectural projects.
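As an illustration of how the sampling parameters interact, the sketch below enumerates every combination of steps, CFG scale and sampler with a fixed seed, so that renders differ only by the parameter under study. The key names mirror common web UI API fields but are assumptions here, as are the sampler names and the seed value; `sampling_grid` is a hypothetical helper.

```python
from itertools import product
from typing import Dict, List, Sequence


def sampling_grid(steps_options: Sequence[int],
                  cfg_options: Sequence[float],
                  samplers: Sequence[str],
                  seed: int = 12345) -> List[Dict]:
    """Build one settings dict per (steps, CFG scale, sampler) combination.

    The seed is held constant so that visual differences between renders
    come from the sampling parameters rather than from random noise.
    """
    return [
        {"steps": steps, "cfg_scale": cfg, "sampler_name": sampler, "seed": seed}
        for steps, cfg, sampler in product(steps_options, cfg_options, samplers)
    ]


grid = sampling_grid([20, 30], [5.0, 7.0, 9.0], ["Euler a", "DPM++ 2M"])
print(len(grid), "combinations to render")
print(grid[0])
```

Rendering the same view across such a grid is a simple way to pick house settings per checkpoint and keep results reproducible from one project to the next.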

Training in Stable Diffusion: Qualiopi-certified program for architects

Mastering Stable Diffusion in a professional architectural context cannot be improvised. The range of parameters, model management, integration with BIM software and data confidentiality issues all call for structured support.

Educasium offers a training program dedicated to architecture and design professionals, built around concrete use cases and validated pedagogical progression. The training covers:

- The fundamentals of AI diffusion generation (understanding to use better).

- Installation and complete configuration of the Stable Diffusion environment.

- Writing effective architecture prompts (style, materials, light, atmosphere).

- Advanced ControlNet use with Revit, SketchUp, AutoCAD exports.

- Integration into an existing production flow (sketch, preliminary design (APS) and detailed design (APD) phases).

- Managing confidentiality and intellectual property of AI renderings.

This training is 100% fundable via OPCO for employees and via FIFPL for independent architects and visualizers. It is carried out within a Qualiopi-certified organization, guaranteeing pedagogical process quality.

To discover the full program and verify your funding eligibility, contact the Educasium team.

FAQ

Is Stable Diffusion really free for professional use in architecture?

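Yes, with some nuance. The AUTOMATIC1111 web UI is free, open-source software, and the original Stable Diffusion model weights were released under the CreativeML Open RAIL-M license, which permits commercial use subject to its use restrictions. Later model versions and community checkpoints may carry different terms, so check the license of each model you download. The only other possible costs are hardware (a suitable GPU) or hosting if you choose a cloud solution. Some advanced checkpoint models are offered as paid access on third-party platforms, but many high-quality models remain freely accessible.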

Are my client projects safe if I use Stable Diffusion locally?

Yes. This is precisely one of the major advantages of Stable Diffusion over Midjourney or other online services: the images you import (plans, mockups, photos) never leave your machine. No data is transmitted to an external server. This guarantees total client file confidentiality, including for public tenders subject to discretion obligations.

Do you need powerful computer equipment to use Stable Diffusion in a firm?

An Nvidia GPU with at least 8 GB of VRAM (an RTX 3060 or higher) delivers good performance. For intensive production use, or in a firm with multiple users, a workstation with an RTX 4080 or 4090 is recommended. It is also possible to use cloud inference solutions (hosted ComfyUI, RunPod, Vast.ai) to avoid the hardware investment, while keeping control of your data through secure configurations.

Put it into practice with Qualiopi-certified training

Stable Diffusion transforms rendering production in architecture firms: less time on visualization phases, more added value on advice and design. But to fully benefit from it, training adapted to your professional reality is essential.

Educasium supports architects, 3D visualizers and architecture firms in mastering generative AI. Our programs are designed to integrate into your existing workflow and produce measurable results from the first weeks.

100% OPCO/FIFPL fundable training. Qualiopi-certified program. Contact Educasium to discover the program and verify your funding eligibility. Replies are sent within 24 business hours.